Third Layer:

Deep Learning

The powerhouse behind today's AI revolution. Multi-layered neural networks that loosely mimic how our brains process information.

Interactive Python Examples · Live Code Execution

16. Why "Deep"? Layers Build Understanding

"Deep" refers to multiple layers of processing. Like building a house: foundation first, then walls, then roof. Each layer builds on the previous one.

Layer 1 (Low-level)

Detects simple features: edges, corners, basic shapes.

Layer 2 (Mid-level)

Combines basic features into more complex patterns: eyes, ears, whiskers.

Layer 3 (High-level)

Synthesizes everything into the high-level concept: "This is a cat."
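To make "each layer builds on the previous one" concrete, here is a minimal sketch of three stacked layers in plain Python. The weights are hand-picked, hypothetical numbers chosen only to keep the arithmetic easy to follow; a real network learns its weights from data, and the function names are illustrative.

```python
def dense_relu(inputs, weights, biases):
    """One fully connected layer followed by ReLU: max(0, w . x + b) per output."""
    return [
        max(0.0, sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

# Hypothetical hand-picked weights; a trained network would learn these.
LAYERS = [
    ([[1, -1, 0, 0], [0, 0, 1, -1]], [0, 0]),  # layer 1: 4 raw inputs -> 2 simple features
    ([[1, 1], [1, -1]], [0, 0]),               # layer 2: 2 features -> 2 combined patterns
    ([[0.5, 0.5]], [0]),                       # layer 3: 2 patterns -> 1 final score
]

def forward(inputs):
    """Pass the input through every layer in order, keeping each layer's output."""
    activations = [inputs]
    for weights, biases in LAYERS:
        activations.append(dense_relu(activations[-1], weights, biases))
    return activations

for depth, values in enumerate(forward([1.0, 0.0, 1.0, 0.0])):
    print(f"after layer {depth}: {values}")
```

Each layer only ever sees the output of the layer before it, which is what lets later layers work with progressively more abstract features.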

16a. Perceptron: Beach Decision

It's Sunday evening, and you are deciding whether to go to the beach to relax. Your brain weighs three main factors to make this decision:

Weather (x₁)

Is it nice weather outside, or raining?

x₁ = 1 (Nice weather)

x₁ = 0 (Rain)

Friend (x₂)

Does your friend want to come with you?

x₂ = 1 (Will come)

x₂ = 0 (Won't come)

Transport (x₃)

Is public transport (bus/train) nearby?

x₃ = 1 (Available)

x₃ = 0 (Not available)

We feed these three factors to a perceptron, the simplest model of an artificial neuron, as binary (0 or 1) inputs x₁, x₂, x₃.

16b. Weights: Assigning Importance

Each input gets a weight that reflects how much it matters to your decision. Let's say weather is your top priority:

The Decision Formula

w₁ = 6 → Weather

w₂ = 2 → Friend

w₃ = 2 → Transport

Threshold = 5

Decision Method

∑ = (w₁×x₁) + (w₂×x₂) + (w₃×x₃)

∑ ≥ 5 → 1 (Go)

∑ < 5 → 0 (Don't go)
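The decision rule above fits in a few lines of Python. This is a minimal sketch: the function name `perceptron` and the constant names are illustrative choices, while the weights and threshold are the ones listed above.

```python
def perceptron(inputs, weights, threshold):
    """Return 1 (go) if the weighted sum reaches the threshold, else 0 (don't go)."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs))
    return 1 if weighted_sum >= threshold else 0

# Weights in the order: weather, friend, transport.
WEIGHTS = [6, 2, 2]
THRESHOLD = 5

print(perceptron([1, 1, 1], WEIGHTS, THRESHOLD))  # everything in your favor: sum = 10 -> 1
print(perceptron([0, 0, 0], WEIGHTS, THRESHOLD))  # nothing in your favor: sum = 0 -> 0
```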

16c. Seeing It in Action

Let's run through two scenarios and watch the perceptron decide:

Scenario 1: Nice Weather ☀️

x₁ = 1 (Nice weather)

x₂ = 0 (No friend)

x₃ = 0 (No transport)

∑ = (6×1) + (2×0) + (2×0) = 6

6 ≥ 5 → Output: 1

✓ Go to the beach!

Scenario 2: Heavy Rain 🌧️

x₁ = 0 (Rain)

x₂ = 1 (Friend coming)

x₃ = 1 (Transport available)

∑ = (6×0) + (2×1) + (2×1) = 4

4 < 5 → Output: 0

✗ Stay home!

The Big Idea: By tuning weights and thresholds, we can completely reshape how a machine makes decisions. This is the foundation of neural networks!
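To see how tuning weights reshapes the decision, the sketch below lists every input combination that leads to "Go" under two weight settings. The first uses the weights from the beach example; the second is a hypothetical alternative in which the friend matters most. The helper names are illustrative.

```python
from itertools import product

def decide(inputs, weights, threshold):
    """Perceptron rule: 1 if the weighted sum reaches the threshold, else 0."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0

def go_cases(weights, threshold):
    """List every (weather, friend, transport) combination that outputs 1."""
    return [x for x in product([0, 1], repeat=3) if decide(x, weights, threshold)]

weather_first = go_cases([6, 2, 2], 5)  # weights from the beach example
friend_first = go_cases([2, 6, 2], 5)   # hypothetical: friend matters most

print(weather_first)  # [(1, 0, 0), (1, 0, 1), (1, 1, 0), (1, 1, 1)]
print(friend_first)   # [(0, 1, 0), (0, 1, 1), (1, 1, 0), (1, 1, 1)]
```

With the original weights you go whenever the weather is nice; with the alternative weights you go whenever your friend comes, rain or shine. The inputs are the same and only the weights changed.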

17. Key Deep Learning Architectures

CNN (Convolutional Neural Network)

"Computer Vision Specialist"

Excels at understanding images and video. Inspired by how our visual cortex processes what we see.

Applications: Face ID, medical imaging (detecting tumors), self-driving car vision.
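The core operation of a CNN is sliding a small filter over an image. Below is a minimal pure-Python sketch, assuming no padding and a stride of 1. The filter here is a hypothetical hand-written vertical-edge detector; a real CNN learns its filters during training. As in most deep learning libraries, the operation is technically cross-correlation (the filter is not flipped).

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# A 4x4 image: dark (0) on the left half, bright (1) on the right half.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hypothetical filter that responds where brightness rises from left to right.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, kernel)
for row in feature_map:
    print(row)  # each row is [0, 2, 0]: the filter fires only at the edge
```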

RNN (Recurrent Neural Network)

"Sequential Data Expert"

Designed for data with order and context: language, speech, time series. It carries a hidden state from step to step, which acts as a "memory" of what came before.

Applications: Siri and Alexa, real-time translation, stock prediction.
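The "memory" of an RNN can be sketched with a single number that is updated at every step. This is a deliberately tiny illustration with scalar inputs and hypothetical hand-picked weights (`w_x`, `w_h`, `b`); real RNNs use vectors and learned weight matrices.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """One recurrent step with a scalar state: h = tanh(w_x*x + w_h*h_prev + b)."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(sequence, w_x=1.0, w_h=0.5, b=0.0):
    """Feed the sequence one element at a time, carrying the hidden state forward."""
    h = 0.0
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states

with_signal = run_rnn([1.0, 0.0, 0.0])     # something happened at the first step
without_signal = run_rnn([0.0, 0.0, 0.0])  # nothing ever happened

print(with_signal[-1])     # still positive: the first input echoes in the state
print(without_signal[-1])  # 0.0
```

Both sequences end with the same input (0.0), yet the final states differ because the hidden state remembers the earlier step.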

18. Why Now? The Perfect Storm

Neural networks have been around since the 1950s. So why did deep learning only explode in the 2010s? Three things converged:

- Data Deluge: The internet gave us massive labeled datasets. ImageNet alone contains more than 14 million labeled images.

- GPU Revolution: Graphics cards built for gaming turned out to be well suited to the highly parallel matrix math of neural networks. Training runs that once took weeks could finish in days or hours.

- Algorithmic Breakthroughs: Better training techniques (such as ReLU activations, improved weight initialization, and dropout) made deep networks actually trainable.

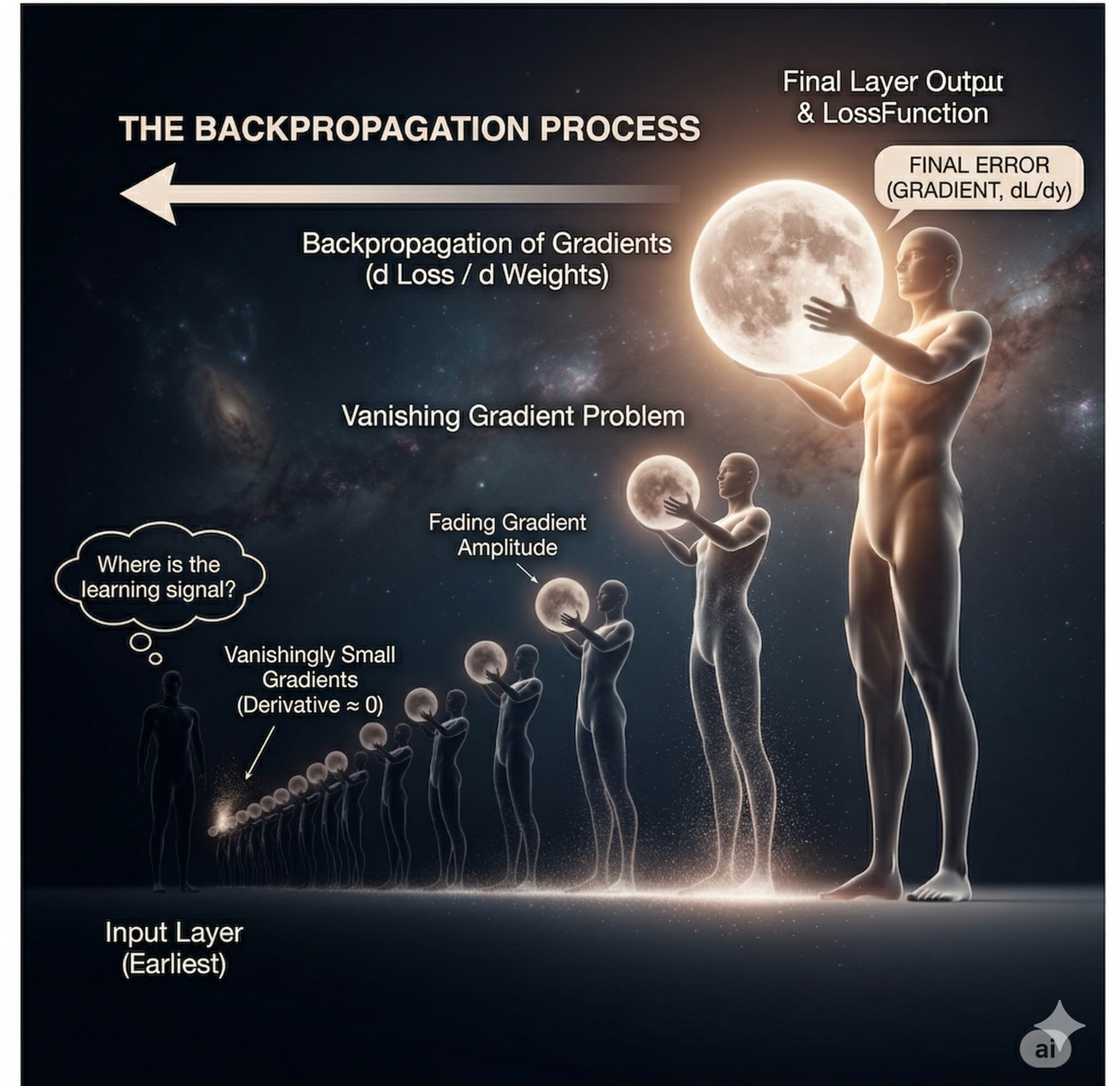

19. Vanishing Gradient Problem

The "Telephone Game" Problem

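During training, the learning signal (gradient) must travel backward through all layers. At each layer it is multiplied by a factor that is often smaller than 1 (the sigmoid's slope, for example, never exceeds 0.25), so it gets weaker and weaker until the earliest layers barely learn at all.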

Remember the "telephone game" where a message gets garbled as it passes through many people? Same problem here!

This roadblock held back deep networks for years. Fixes such as ReLU activations (for feed-forward networks) and LSTM cells (for recurrent networks) greatly reduce it.
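A quick calculation shows how fast the signal fades. The sketch below is a simplification that multiplies only the activation function's slope at each layer and ignores the weights entirely. It compares the sigmoid at its steepest point (slope 0.25) with ReLU on a positive input (slope 1).

```python
import math

def sigmoid_grad(z):
    """Derivative of the sigmoid; its maximum value is 0.25, reached at z = 0."""
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)

def relu_grad(z):
    """Derivative of ReLU: 1 for positive inputs, 0 otherwise."""
    return 1.0 if z > 0 else 0.0

def backprop_signal(num_layers, local_grad, z):
    """Multiply the per-layer slope num_layers times (weights ignored for simplicity)."""
    signal = 1.0
    for _ in range(num_layers):
        signal *= local_grad(z)
    return signal

for depth in (1, 5, 10, 20):
    sig = backprop_signal(depth, sigmoid_grad, 0.0)
    rel = backprop_signal(depth, relu_grad, 1.0)
    print(f"{depth:2d} layers: sigmoid {sig:.2e}, ReLU {rel:.1f}")
```

Even in the sigmoid's best case, ten layers shrink the signal to less than one millionth of its original size, while the ReLU path passes it through unchanged.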

The Innermost Layer:

Generative AI

From recognition to creation. Instead of asking "What is this?", generative AI asks "What if I made something new?"

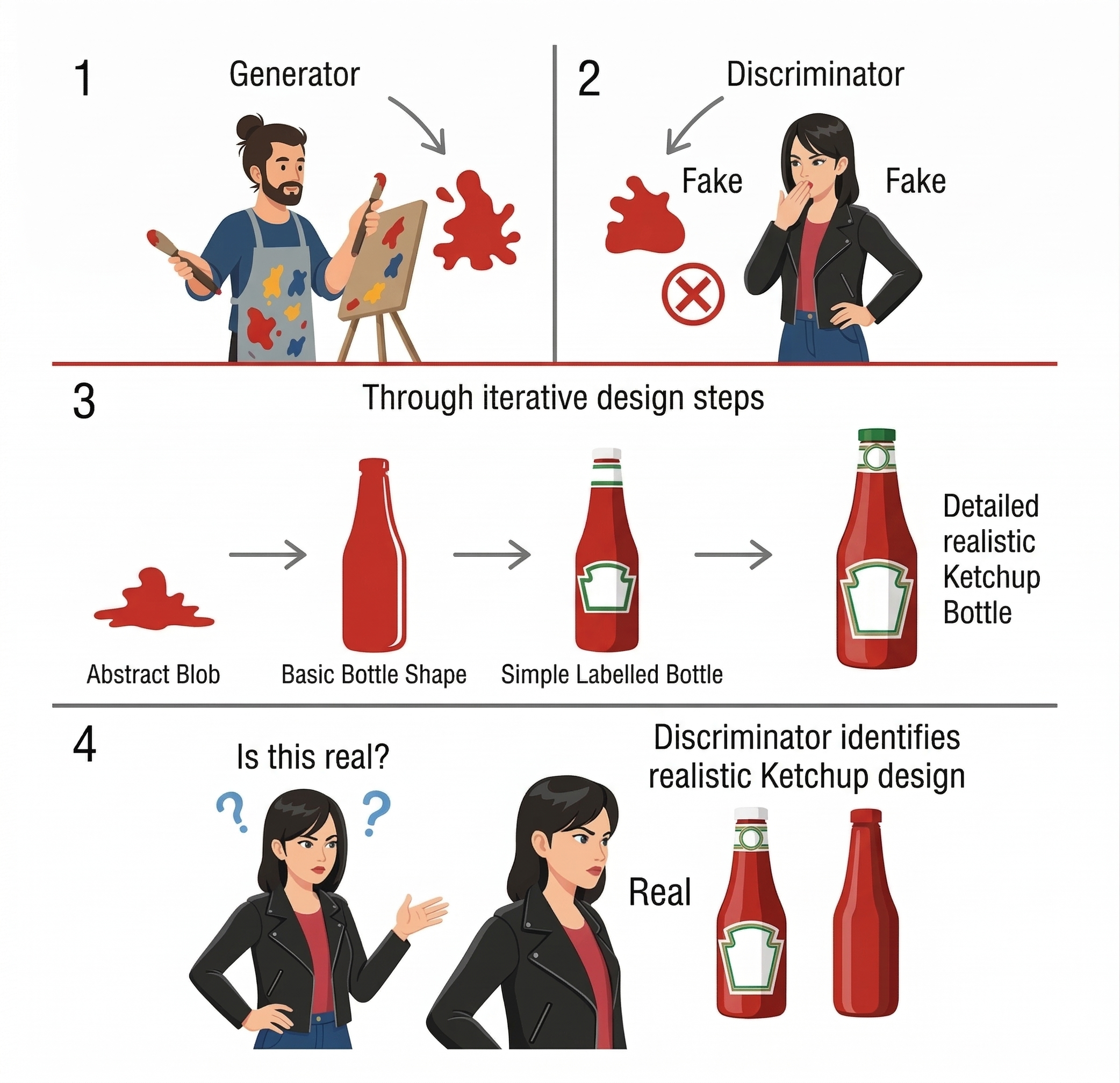

20. GANs: A Creative Duel

Generative Adversarial Networks

The 2014 breakthrough from Ian Goodfellow and colleagues: teaching AI to create by making two neural networks compete against each other!

- Generator (The Forger): Creates synthetic data trying to pass as real.

- Discriminator (The Detective): Tries to spot the fakes from the real deal.

21. The Training Arms Race

How They Improve Together

Round 1: Generator creates a crude blob. Discriminator easily spots it as fake.

After thousands of rounds: Generator gets better at faking. Discriminator sharpens its detection. Eventually, the fakes can become very hard to tell apart from real data!
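The tug-of-war can be expressed as two loss functions. The sketch below assumes the discriminator outputs a probability that its input is real and uses binary cross-entropy, with the commonly used "non-saturating" generator loss. The probability values are hypothetical snapshots, not results from a trained model, and no networks are actually trained here.

```python
import math

def bce(prediction, target):
    """Binary cross-entropy for one prediction strictly between 0 and 1."""
    return -(target * math.log(prediction) + (1 - target) * math.log(1 - prediction))

def discriminator_loss(d_real, d_fake):
    """The discriminator wants d_real close to 1 and d_fake close to 0."""
    return bce(d_real, 1) + bce(d_fake, 0)

def generator_loss(d_fake):
    """The generator wants the discriminator to output 1 on its fakes."""
    return bce(d_fake, 1)

# Hypothetical discriminator outputs: (probability real is real, probability fake is real).
snapshots = {
    "round 1 (crude blob)": (0.9, 0.1),
    "late training (convincing fakes)": (0.5, 0.5),
}

for name, (d_real, d_fake) in snapshots.items():
    print(f"{name}: D loss {discriminator_loss(d_real, d_fake):.3f}, "
          f"G loss {generator_loss(d_fake):.3f}")
```

Early on the discriminator's loss is low and the generator's is high. As the fakes improve, the discriminator is reduced to guessing (0.5) and the balance shifts.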

The Dark Side

- Deepfakes: Frighteningly realistic fake videos that can manipulate public opinion.

- Mode Collapse: Sometimes the generator gets stuck creating variations of the same thing over and over.

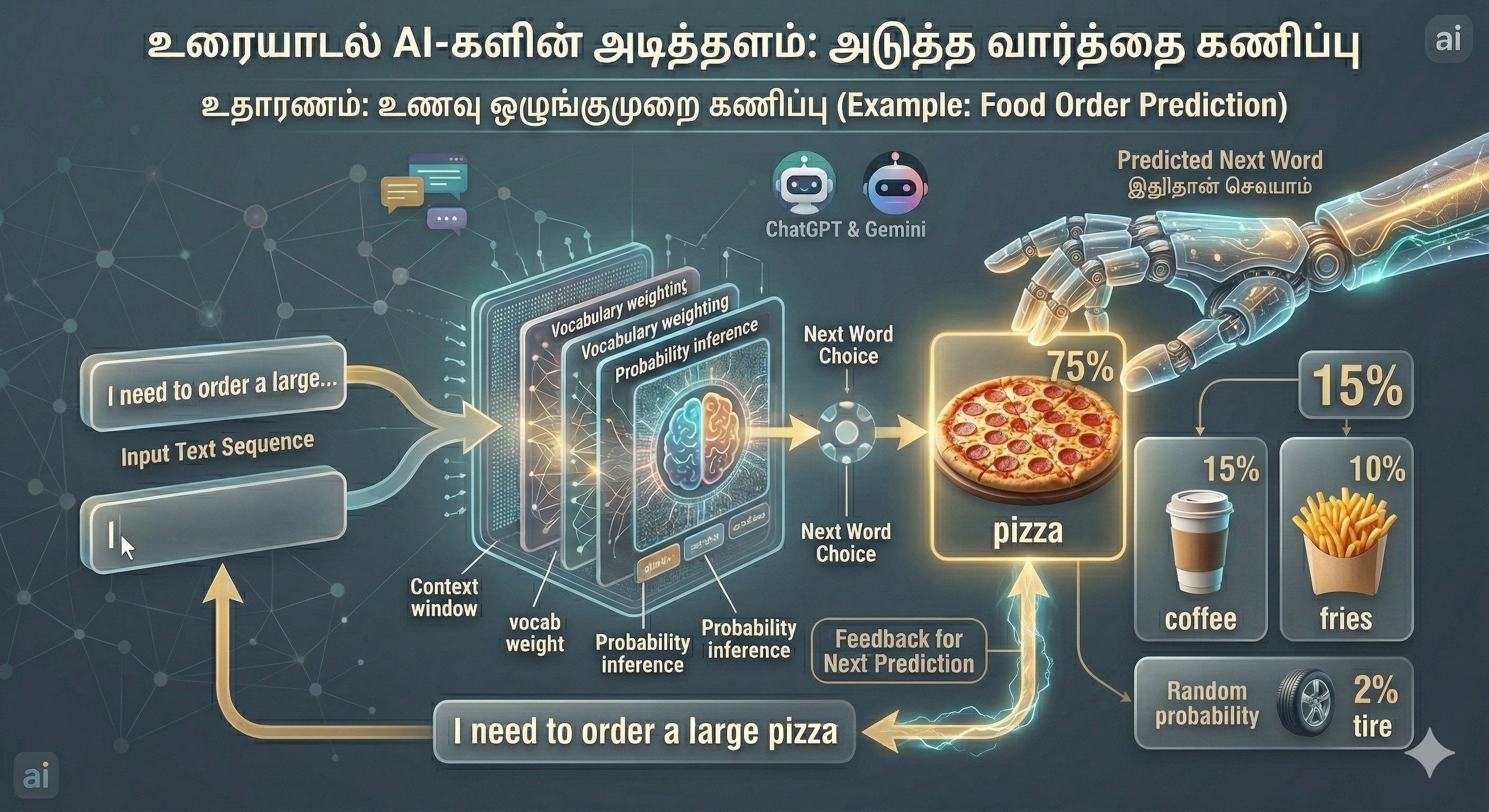

22. Transformers & Large Language Models

The architecture powering ChatGPT, Gemini, and the AI writing revolution.

- Next-Token Prediction: At its core, the model just predicts the next token (roughly, a word or piece of a word) based on what came before. Simple idea, profound results.

- Attention Mechanism: The breakthrough that lets AI understand context. It learns which words to "pay attention to".

Example: In "The animal didn't cross the street because it was too tired", attention helps determine "it" refers to the animal, not the street.
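Here is a minimal sketch of how attention weights are computed for the word "it" in that sentence, using scaled dot-product attention. The two-dimensional vectors are hypothetical and hand-picked so that "it" points in the same direction as "animal"; a real model learns such vectors (with hundreds or thousands of dimensions) during training.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over a list of keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)

# Hypothetical key vectors for the two candidate nouns.
keys = {"animal": [1.0, 0.0], "street": [0.0, 1.0]}
# Hypothetical query vector for the word "it".
query_it = [2.0, 0.0]

weights = attention_weights(query_it, list(keys.values()))
for word, weight in zip(keys, weights):
    print(f"{word}: {weight:.2f}")
```

Most of the weight lands on "animal", so the representation of "it" is built mainly from information about the animal rather than the street.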

23. The Miracle of Scale

Emergent Abilities: More Is Different

GPT-3 has 175 billion parameters. Something remarkable happens at this scale: capabilities appear that weren't explicitly programmed. The model was trained only to predict the next token, not specifically to translate or reason, yet it can do both to a useful degree.

"Because it rained, ____"

Small model: "the streets got wet" (predictable)

Large model: "children ran outside with paper boats while streams began to swell" (contextual understanding + creativity)

What's Next: Natural Language Processing

Language is where AI truly comes alive. All the techniques we've covered (machine learning, deep learning, transformers) reach their full potential when applied to human communication. Next chapter: we dive into the fascinating world of NLP.