Chapter 2:

Layers of Intelligence

Unraveling the Magic Behind AI, Machine Learning, Deep Learning, and Generative AI

Technology Public Lecture

1. Introduction: Untangling the Web

Artificial Intelligence (AI) has become deeply embedded in our daily lives, from search engines to voice assistants. But what's really going on behind the curtain of magic?

The Common Mix-Up:

Many people use AI, Machine Learning, and Deep Learning interchangeably. The terms are closely related, but they are not the same thing: each one is a more specialized subset of the one before it.

Let's use a simple analogy to make sense of it all!

2. The Russian Doll Analogy

Think of Russian Matryoshka dolls — those charming wooden dolls that nest inside one another, each smaller than the last.

- Outermost Doll: Artificial Intelligence (AI)

- Next Layer: Machine Learning (ML)

- Deeper Still: Deep Learning (DL)

- At the Core: Generative AI

They're not competing technologies — they're layers of intelligence, each building upon the last.

3. The Outermost Layer: Artificial Intelligence

Artificial Intelligence (AI): This isn't a single technology — it's an umbrella term covering any technique that enables machines to mimic human intelligence.

The Ultimate Goal

Build machines that can think, reason, understand language, and solve problems — sometimes even better than humans can.

4. Two Types of Players

1. Narrow AI

"The Specialist"

Every AI system we interact with today is narrow AI.

- Masters of a single domain or task.

- A chess-playing AI can't make you coffee — it only knows chess!

- Think of it as: A world-class specialist in one field.

2. General AI (AGI)

"The All-Rounder"

The holy grail of AI research — still theoretical.

- Could learn and adapt to any task, just like humans do.

- Solve math problems in the morning, write poetry at lunch, and counsel patients in the afternoon.

- Think of it as: A true polymath — skilled across every domain.

5. Two Paths of AI (Not Enemies, Partners)

| School 1: Symbolic AI | School 2: Machine Learning |
|---|---|
| "Strict Teacher Method" | "Smart Student Method" |
| Explicitly program human knowledge as rules into the system. | Skip the rules: feed it data and let it figure things out. |
| IF patient has fever THEN give Paracetamol (Expert Systems). | Show it thousands of labeled images until it learns what "a cat" looks like. |
| Provides clear structure & logic. | Provides flexibility and the ability to keep improving as more data arrives. |

6. AI in the Art World: A Debate

Théâtre d'Opéra Spatial

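In 2022, Théâtre d'Opéra Spatial won first prize in the digital arts category of the Colorado State Fair's fine arts competition. It wasn't painted by hand: its creator, Jason M. Allen, generated it with the AI image tool Midjourney.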

Questions it raised:

- Can we call an AI-created work 'art'?

- Who is the true artist?

- Is creativity exclusive to humans?

Second Doll:

Machine Learning

This isn't just another technology; it's a revolution that tore up the 'Old Testament' of computing and wrote a 'New Testament'!

7. The Paradigm Shift: Rules vs Learning

1. Traditional Programming (The Old Way)

Like following a strict cookbook. The computer executes instructions but can't improvise.

Example: Website tax calculation.
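A minimal Python sketch of the tax example, assuming a hypothetical flat 8% rate (real tax rules are far more involved). The function name `calculate_tax` is illustrative. Every rule here is written by the programmer; the computer only follows it.

```python
# Traditional programming: a human writes the rule, the computer just executes it.
TAX_RATE = 0.08  # hypothetical flat sales-tax rate, chosen for illustration only


def calculate_tax(price: float) -> float:
    """Apply a hand-written rule: tax = price * rate, rounded to cents."""
    if price < 0:
        raise ValueError("price must be non-negative")
    return round(price * TAX_RATE, 2)


print(calculate_tax(50.00))  # 4.0
```

If the tax law changes, nothing adapts on its own: a programmer has to edit `TAX_RATE` or rewrite the rule.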

2. Machine Learning (The Revolution)

Here's where it gets interesting: we flip the entire equation! Instead of giving the computer Data + Rules and getting Answers, we give it Data + Answers.

The system learns to create its own rules! Example: Teaching a computer to recognize cats.
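Recognizing cats takes far more data than fits on a slide, so here is the same flip applied to the website tax example instead. This sketch assumes some hypothetical receipts (prices plus the tax actually charged) and lets a least-squares fit discover the rate; `learn_rate` is an illustrative name, and a one-parameter line is a deliberate simplification of what real ML models learn.

```python
# Machine learning in miniature: we supply data AND answers,
# and the program works out the rule (here, a single tax rate) by itself.


def learn_rate(prices: list[float], taxes: list[float]) -> float:
    """Least-squares fit of tax = rate * price (a straight line through the origin)."""
    numerator = sum(p * t for p, t in zip(prices, taxes))
    denominator = sum(p * p for p in prices)
    return numerator / denominator


# Hypothetical receipts: the program is never told the rate.
prices = [10.0, 25.0, 40.0, 100.0]
taxes = [0.80, 2.00, 3.20, 8.00]

rate = learn_rate(prices, taxes)
print(f"learned rate: {rate:.2%}")  # learned rate: 8.00%
print(round(rate * 60.0, 2))        # 4.8 (the learned rule applied to a new price)
```

The program was never handed the 8% rule; it recovered it from examples. With noisy receipts it would still return the best-fitting rate rather than failing outright.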

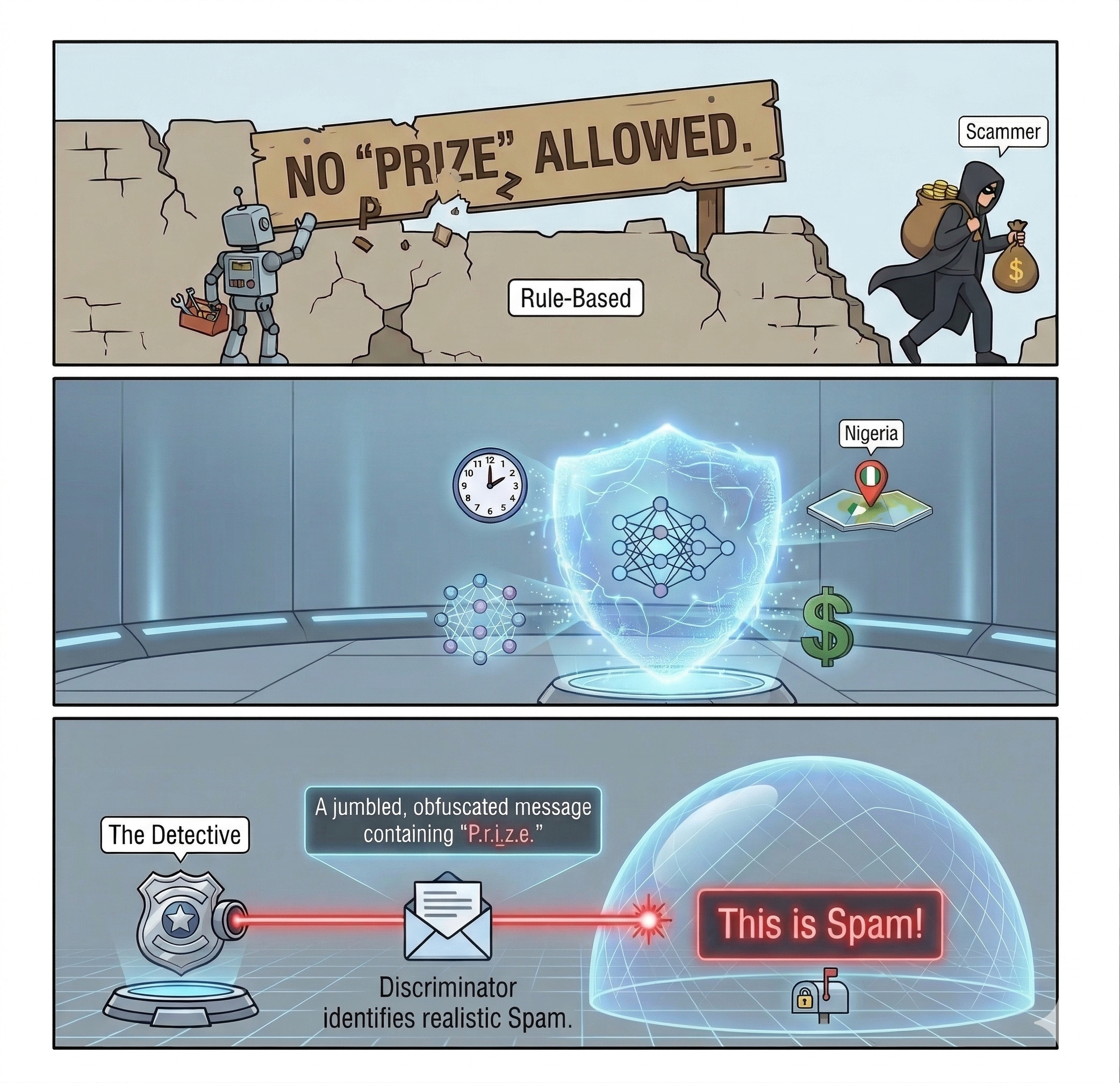

8. Real World Example: Spam Detection

The Traditional Approach Fails: Create a rule like "block emails mentioning 'prize'" and scammers simply write "pr!ze" or "p-r-i-z-e" to bypass it.
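A tiny Python sketch of that hand-written rule shows how easily it is dodged. The function `is_spam_by_rule` is hypothetical; real filters are more elaborate, but any fixed keyword list has the same weakness.

```python
# A hand-written spam rule: block any email whose subject mentions "prize".
def is_spam_by_rule(subject: str) -> bool:
    return "prize" in subject.lower()


for subject in ["Claim your PRIZE now", "Claim your pr!ze now", "Claim your p-r-i-z-e now"]:
    print(f"{subject!r:32} -> blocked={is_spam_by_rule(subject)}")
```

Only the first subject is blocked; the two misspelled variants sail straight through.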

The Machine Learning Advantage: instead of one brittle rule, the model weighs many signals at once, such as:

- "This person has never sent you an email before."

- "This email came from Nigeria at 2 AM."

- "There are lots of dollar signs ($$$) in the subject."

It synthesizes these patterns into a decision: "This looks like spam!"
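How can a program "synthesize" signals like these? Below is a minimal sketch using a perceptron, one of the simplest learned classifiers (real spam filters typically use richer models such as naive Bayes or neural networks). The three numeric features, the seven training emails, and their labels are all hypothetical; the point is that the weights for each signal are learned from labeled examples, not written by hand.

```python
# Hypothetical labeled training data. Each email is reduced to three signals:
# [sender is new to you (0/1), sent between midnight and 5 AM (0/1), "$" signs in subject]
EMAILS = [
    [1, 1, 5], [1, 0, 3], [1, 1, 0],             # labeled spam
    [0, 0, 0], [0, 1, 0], [1, 0, 0], [0, 0, 1],  # labeled not spam
]
LABELS = [1, 1, 1, 0, 0, 0, 0]  # 1 = spam, 0 = not spam


def predict(weights: list[float], bias: float, features: list[int]) -> int:
    """Combine all signals into one score, then make a yes/no decision."""
    score = bias + sum(w * x for w, x in zip(weights, features))
    return 1 if score > 0 else 0


def train_perceptron(emails, labels, epochs: int = 1000):
    """Classic perceptron rule: nudge the weights whenever a prediction is wrong."""
    weights, bias = [0.0] * len(emails[0]), 0.0
    for _ in range(epochs):
        mistakes = 0
        for features, label in zip(emails, labels):
            error = label - predict(weights, bias, features)  # -1, 0 or +1
            if error:
                mistakes += 1
                weights = [w + error * x for w, x in zip(weights, features)]
                bias += error
        if mistakes == 0:  # every training email is now classified correctly
            break
    return weights, bias


weights, bias = train_perceptron(EMAILS, LABELS)
print("learned weights:", weights, "bias:", bias)
print("new email [1, 1, 4] is spam?", bool(predict(weights, bias, [1, 1, 4])))
```

No single signal decides the outcome: a new sender alone, or a late-night email alone, is not enough, but several weak signals together tip the score over zero.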

9. Three Main Paths of Learning

1. Supervised Learning

Learning through a teacher (Labeled Data).

2. Unsupervised Learning

Self-discovery method (Unlabeled Data).

3. Reinforcement Learning

Learning from trial and error.

10. Supervised Learning: Learning with a Teacher

Think of this as learning with answer keys. You provide both the data and the correct answers (labels).

How It Works:

- Training Phase: Feed it 10,000 labeled images: "cat", "dog", "bird"...

- Pattern Recognition: The system identifies subtle features (ear shapes, fur patterns, etc.)

- Testing: Show it a new image → "95% confident this is a dog"

Technical Note:

Garbage In = Garbage Out. If your labels are wrong, the answers will be wrong too!
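The train-then-test loop above can be sketched with k-nearest neighbors, one of the simplest supervised algorithms. This is a swapped-in illustration: real image recognizers usually run deep neural networks on raw pixels, whereas this sketch assumes two hypothetical hand-made features (ear pointiness and snout length, scaled 0 to 1) and six made-up labeled animals. Confidence here simply means the share of nearest neighbors that agree.

```python
import math
from collections import Counter

# Hypothetical labeled training set: ([ear pointiness, snout length], label).
TRAINING = [
    ([0.90, 0.20], "cat"), ([0.80, 0.30], "cat"), ([0.85, 0.25], "cat"),
    ([0.30, 0.80], "dog"), ([0.20, 0.90], "dog"), ([0.40, 0.70], "dog"),
]


def classify(features, training=TRAINING, k=3):
    """k-nearest neighbors: let the k closest labeled examples vote."""
    nearest = sorted(training, key=lambda item: math.dist(features, item[0]))[:k]
    votes = Counter(label for _, label in nearest)
    label, count = votes.most_common(1)[0]
    return label, count / k  # confidence = share of neighbors that agree


label, confidence = classify([0.35, 0.75])
print(f"{confidence:.0%} confident this is a {label}")  # 100% confident this is a dog
```

Swap the labels in `TRAINING` and the same code will report, just as confidently, that the new animal is a cat: garbage in, garbage out.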

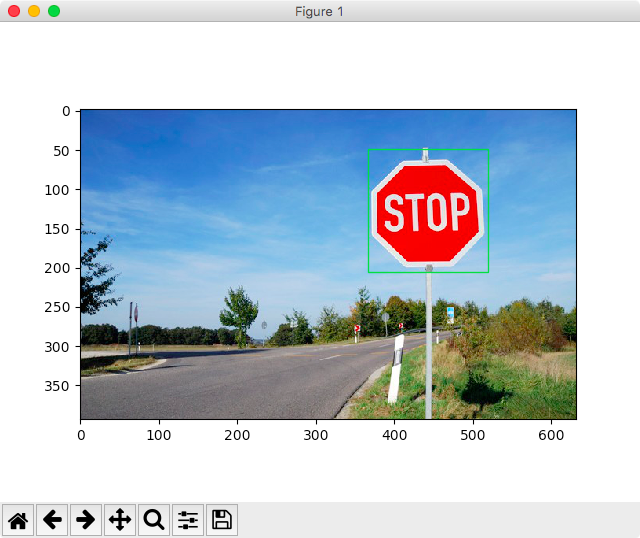

11. The Hidden Labor Behind AI

Here's the dirty secret: The hardest part of supervised learning isn't fancy algorithms — it's the tedious human work of data labeling.

Example: Autonomous Vehicles

Teams of people manually draw bounding boxes around pedestrians, cars, and traffic signs in millions of video frames. It's painstaking work.

Behind many "smart" AI systems is an army of human labelers, often working through crowdsourcing platforms such as Amazon Mechanical Turk.
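What does one of those hand-drawn labels actually look like as data? Here is a minimal sketch, assuming a hypothetical record format (class name plus pixel corners; real datasets each define their own schema) and a made-up 1280x720 frame. The `is_valid` check is an illustrative example of the quality control this painstaking work needs, since a single careless box becomes wrong training data.

```python
from dataclasses import dataclass


@dataclass
class BoundingBox:
    """One human-drawn label: a rectangle in pixel coordinates plus a class name."""
    label: str  # e.g. "pedestrian", "car", "traffic sign"
    x_min: int
    y_min: int
    x_max: int
    y_max: int

    def area(self) -> int:
        return (self.x_max - self.x_min) * (self.y_max - self.y_min)

    def is_valid(self, width: int, height: int) -> bool:
        """Catch common slips: inverted corners or a box spilling outside the frame."""
        return 0 <= self.x_min < self.x_max <= width and 0 <= self.y_min < self.y_max <= height


# One hypothetical 1280x720 video frame, labeled by hand.
frame_labels = [
    BoundingBox("pedestrian", 410, 220, 470, 380),
    BoundingBox("car", 600, 300, 900, 480),
    BoundingBox("traffic sign", 1250, 100, 1300, 160),  # runs off the right edge
]
for box in frame_labels:
    print(box.label, box.area(), "ok" if box.is_valid(1280, 720) else "needs review")
```

Multiply this by every object in every frame of millions of frames, and the scale of the hidden labor becomes clear.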

12. Unsupervised Learning: Finding Patterns Alone

No labels, no answers — just raw data. The system must find patterns on its own.

Example: Retail Store Data

Unlabeled data like purchased items, purchase frequency, amount spent.

What Happens:

- The AI automatically discovers customer segments with similar buying patterns (using techniques like K-Means Clustering and RFM Analysis, which scores customers on Recency, Frequency, and Monetary value).
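A minimal K-Means sketch in plain Python, assuming nine hypothetical customers described by just two of the three RFM signals: visits per month (frequency) and average spend in hundreds of dollars (monetary). The comments reveal the groups to the reader only; the algorithm never sees any labels. For reproducibility it uses deterministic farthest-first seeding, a simplification of the randomized k-means++ seeding common in libraries.

```python
import math

# Hypothetical, unlabeled customer data: (visits per month, average spend in $100s).
CUSTOMERS = [
    (8, 9), (9, 8), (8.5, 8.5),  # frequent, big spenders
    (4, 1), (5, 1), (4.5, 1.5),  # come fairly often, spend little
    (1, 3), (1, 4), (1, 3.5),    # only one visit so far
]


def farthest_first(points, k):
    """Deterministic seeding: start at the first point, then repeatedly add the
    point that is farthest from every centroid chosen so far."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(max(points, key=lambda p: min(math.dist(p, c) for c in centroids)))
    return centroids


def k_means(points, k, max_iterations=100):
    centroids = farthest_first(points, k)
    for _ in range(max_iterations):
        # Assignment step: every customer joins its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its members.
        new_centroids = [
            tuple(sum(axis) / len(members) for axis in zip(*members)) if members else centroids[i]
            for i, members in enumerate(clusters)
        ]
        if new_centroids == centroids:  # nothing moved: converged
            break
        centroids = new_centroids
    return centroids, clusters


centroids, clusters = k_means(CUSTOMERS, k=3)
for centroid, members in zip(centroids, clusters):
    print(f"center {tuple(round(v, 1) for v in centroid)}: {members}")
```

The algorithm only returns groups and their centers; naming a group "VIP" or "Discount Hunters" is still a human interpretation of those centers.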

13. Business Magic: 3 Groups AI Identifies

1. VIP Customers

Identity: Frequent visitors, big spenders.

Strategy: No discounts needed; give them recognition and an exclusive relationship manager (VIP status).

2. Discount Hunters

Identity: Only come when there's a sale.

Strategy: VIP perks are wasted. Send flash sale announcements like "50% off today only".

3. New Buds

Identity: First-time buyers.

Strategy: Warm welcome discount and loyalty program to convert them into loyal customers.

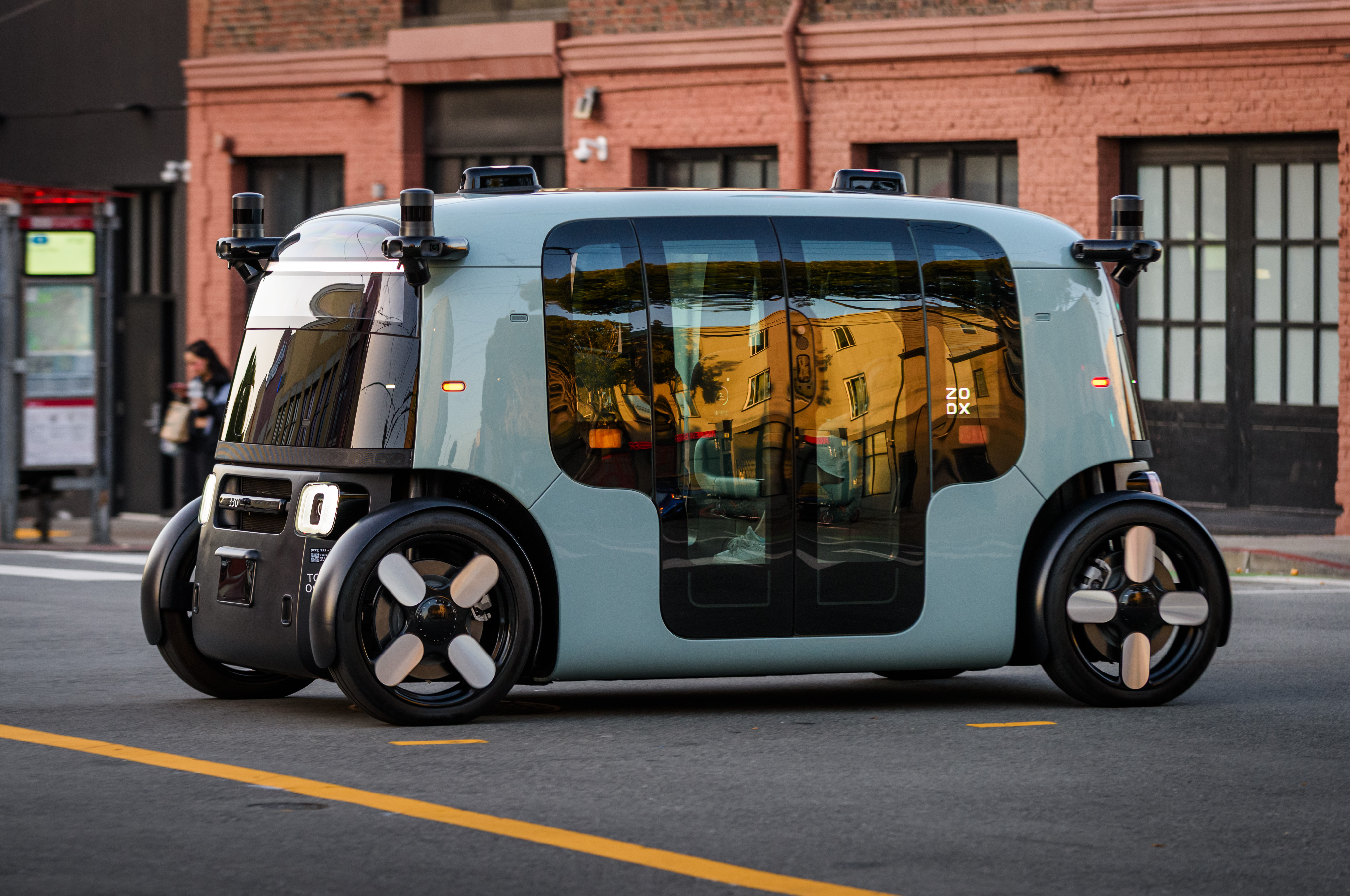

14. Reinforcement Learning: Trial, Error, Reward

The AI learns through experience — like a child discovering that touching a hot stove hurts. Every action has consequences.

Example: Autonomous Taxi

- Drop off passenger safely → +10 points

- Run a red light → -100 points

- Through millions of simulations, it learns which behaviors maximize rewards.
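A toy, two-state version of the taxi example, trained with tabular Q-learning (one classic reinforcement learning algorithm; real autonomous-driving research uses far richer simulators and methods). The +10 and -100 rewards come from the example above; the -1 cost for waiting, the learning rate `alpha`, discount `gamma`, exploration rate `epsilon`, and the state and action names are assumptions made for this sketch.

```python
import random

ACTIONS = ["wait", "go"]
RED_LIGHT, GREEN_LIGHT = 0, 1  # the two states in this toy world


def step(state, action):
    """Toy environment: returns (reward, next_state, episode_done)."""
    if state == RED_LIGHT:
        if action == "go":
            return -100, None, True    # ran a red light: heavy penalty, episode over
        return -1, GREEN_LIGHT, False  # waiting costs a little time; light turns green
    if action == "go":
        return 10, None, True          # passenger dropped off safely
    return -1, GREEN_LIGHT, False      # idling at a green light wastes time


def train(episodes=1000, alpha=0.5, gamma=0.9, epsilon=0.3, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in (RED_LIGHT, GREEN_LIGHT) for a in ACTIONS}
    for _ in range(episodes):
        state = RED_LIGHT
        for _ in range(20):  # cap the episode length
            # Epsilon-greedy: usually exploit the best-known action, sometimes explore.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            reward, next_state, done = step(state, action)
            # Q-learning update: move toward reward + discounted best future value.
            future = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * future - q[(state, action)])
            if done:
                break
            state = next_state
    return q


q = train()
for state, name in [(RED_LIGHT, "red light"), (GREEN_LIGHT, "green light")]:
    best = max(ACTIONS, key=lambda a: q[(state, a)])
    print(f"At a {name}, the learned policy is: {best}")
```

Nobody tells the agent "stop at red lights". It tries both actions, collects rewards and penalties, and settles on waiting at red and going at green because that sequence earns the most total reward.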

15. A Historic Moment:

AlphaGo's "Move 37"

March 2016. In Game 2 of its match against world champion Lee Sedol, Google DeepMind's AlphaGo played move 37 of the game: a shoulder hit on the fifth line. Many expert commentators thought it was a mistake, a move that broke with centuries of conventional Go strategy.

They were wrong. Move 37 was brilliant: it helped AlphaGo win the game and changed Go forever. This was the moment we realized: AI can discover strategies beyond human imagination.